Robust Sim2Real Transfer with the da Vinci Research Kit:

A Study On Camera, Lighting, and Physics Domain Randomization

Mustafa Haiderbhai, Radian Gondokaryono, Thomas Looi, James M. Drake, Lueder A. Kahrs

Medical Computer Vision and Robotics Lab, The Wilfred and Joyce Posluns CIGITI @ SickKids, University of Toronto

Abstract

Autonomous surgical robotics is a growing area of research, with advances being made in the areas of vision and control. Central to this research is the need for simulations to facilitate data collection and simulate learning environments for Reinforcement Learning (RL) agents. Recent simulators have facilitated RL policy generation, but lack a robust sim2real pipeline and a proven vision-based policy that can use any type of camera including the da Vinci Surgical System (dVSS) Endoscope.

To solve this, we build a ROS-based sim2real pipeline that incorporates a Unity3D da Vinci Research Kit (dVRK) simulation, modular kinematics, and shared interfaces. We examine the vision-based task of cube pushing, and train RL policies to execute in real life through Domain Randomization. Our experiments evaluate model success in simulation and two camera systems: OAK-1 and the dVSS Endoscope. Our results indicate that Domain Randomization is effective at bridging the sim2real gap, and even extends to the difficult endoscope scenario.

We achieve 100% transfer success rate on both OAK-1 and the dVSS Endoscope, with gains of over 60% compared to a base model with no Domain Randomization. We examine the various randomization parameters, including lighting, camera, and physics variables, and determine that all parameters play a significant role in bridging the sim2real gap. Testing across extreme lighting and camera configurations not seen in simulation, our models continue to perform well, with 85% accuracy on the OAK-1 camera. Our future work will extend to other tasks and more complex policies to take advantage of stereo-camera imaging.

The Sim2Real Gap

Models trained purely in simulation show a 100% success rate inside the simulation, but fail when transferred to an equivalent real-life setup.

Simulation

SUCCESS

Regular (OAK-1) Camera

FAILURE

da Vinci Endoscope

FAILURE

Novel Simulation

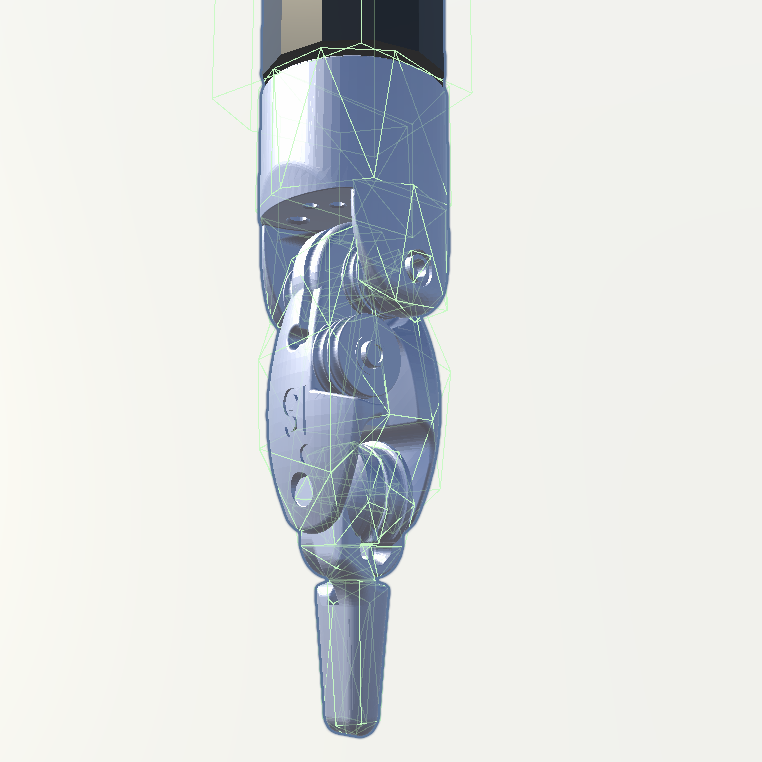

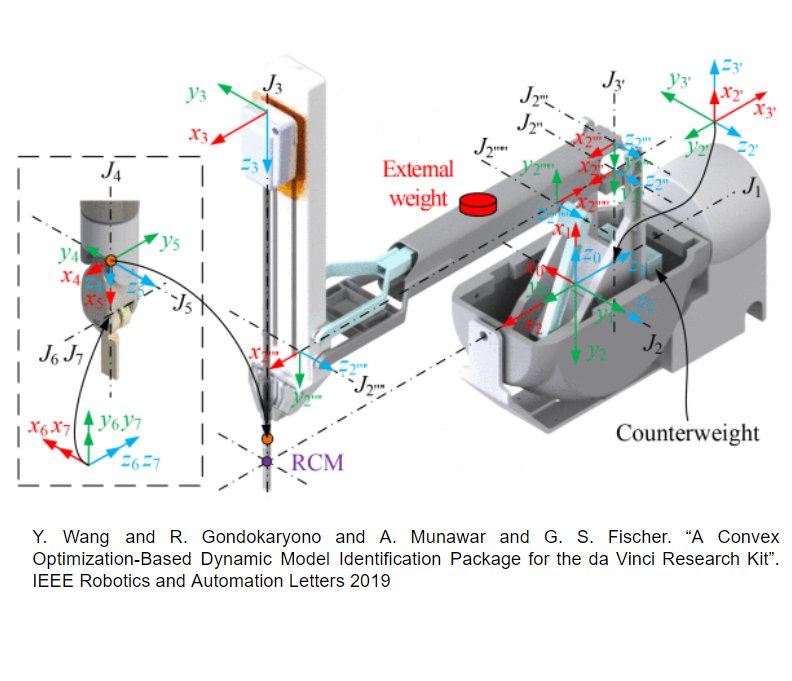

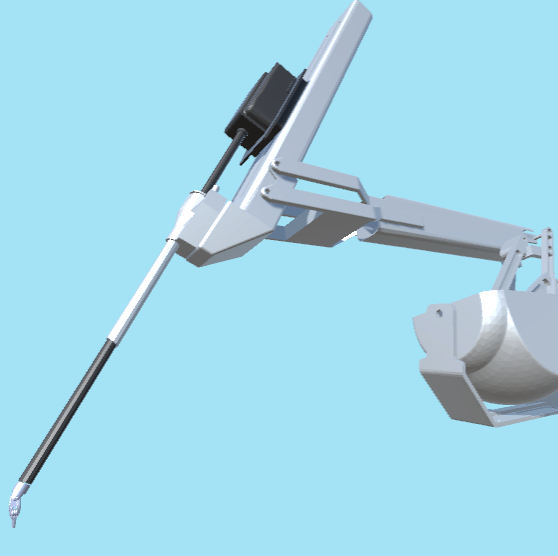

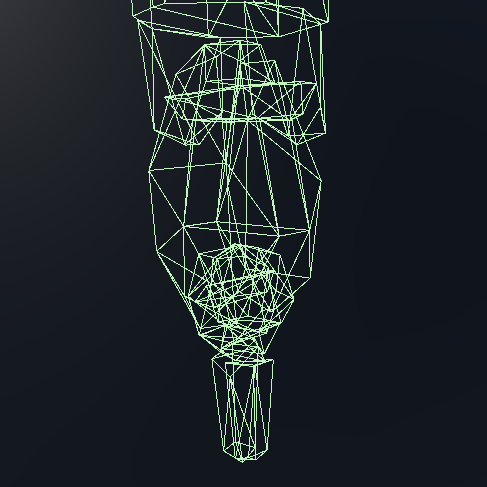

To accomplish successful sim2real transfer, we build a novel simulator with modern and accurate dVRK physics. Our simulation is built on the Unity3D game engine, which provides NVIDIA PhysX physics and modern rendering capabilities.

dVRK Modeling

Dynamics

Modular Kinematics

Custom Collision Meshes

Sim2Real Pipeline

Our Sim2Real pipeline builds on our concurrent work in creating a modular robotics control framework. The modularity of the framework, together with a modular kinematics solver, allows us to flexibly output to any modality and accept input from any source. Any step of the pipeline can be swapped between simulated and real components, which makes sim2real testing and execution seamless.
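The swap between simulated and real components can be pictured with a minimal Python sketch. This is not our framework's code: the names `ArmBackend`, `SimArm`, `LoggingArm`, `run_step`, and `nudge_policy`, as well as the six-joint default, are hypothetical and chosen only for illustration. The point is that the control step depends on a shared interface rather than on a specific backend.

```python
from typing import Callable, List, Protocol, Sequence


class ArmBackend(Protocol):
    """Hypothetical shared interface that simulated and real backends satisfy."""

    def read_joints(self) -> List[float]: ...

    def command_joints(self, q: Sequence[float]) -> None: ...


class SimArm:
    """Toy simulated arm: commanded joint values are reached instantly."""

    def __init__(self, n_joints: int = 6) -> None:  # joint count is an assumption
        self._q = [0.0] * n_joints

    def read_joints(self) -> List[float]:
        return list(self._q)

    def command_joints(self, q: Sequence[float]) -> None:
        self._q = list(q)


class LoggingArm:
    """Stand-in for a hardware backend: records commands instead of moving a robot."""

    def __init__(self, n_joints: int = 6) -> None:
        self._q = [0.0] * n_joints
        self.sent: List[List[float]] = []

    def read_joints(self) -> List[float]:
        return list(self._q)

    def command_joints(self, q: Sequence[float]) -> None:
        self.sent.append(list(q))


def run_step(policy: Callable[[List[float]], List[float]], arm: ArmBackend) -> None:
    """Read the state, query the policy, send the command; works with any backend."""
    arm.command_joints(policy(arm.read_joints()))


def nudge_policy(q: List[float]) -> List[float]:
    """Placeholder policy: shift every joint by a fixed 0.1 offset."""
    return [qi + 0.1 for qi in q]
```

Calling `run_step(nudge_policy, SimArm())` and `run_step(nudge_policy, LoggingArm())` exercises the same control code against either backend, which is the property the pipeline relies on.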

Domain Randomization

To train robust agents, we apply Domain Randomization to various parameters, including:

Light Direction/Intensity

Camera Pose

Cube Mass/Scale/Friction
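As a rough illustration, the parameters above could be drawn from uniform ranges once per training episode. The sketch below is an assumption-laden example, not our training code: the field names, the per-episode sampling schedule, the uniform distributions, and every numeric range are placeholders rather than the values used in the paper.

```python
import random
from dataclasses import dataclass
from typing import Tuple


@dataclass
class EpisodeParams:
    """One randomized configuration; all field names are illustrative."""
    light_direction_deg: Tuple[float, float]          # (pitch, yaw) of the light
    light_intensity: float
    camera_position_offset_m: Tuple[float, float, float]
    camera_rotation_offset_deg: Tuple[float, float, float]
    cube_mass_kg: float
    cube_scale: float
    cube_friction: float


# Placeholder ranges, NOT the values used in the paper.
RANGES = {
    "light_pitch_deg": (20.0, 90.0),
    "light_yaw_deg": (0.0, 360.0),
    "light_intensity": (0.5, 2.0),
    "camera_position_offset_m": (-0.02, 0.02),
    "camera_rotation_offset_deg": (-5.0, 5.0),
    "cube_mass_kg": (0.01, 0.1),
    "cube_scale": (0.8, 1.2),
    "cube_friction": (0.3, 1.0),
}


def _uniform(rng: random.Random, key: str) -> float:
    """Draw one value uniformly from the named range."""
    low, high = RANGES[key]
    return rng.uniform(low, high)


def sample_episode_params(rng: random.Random) -> EpisodeParams:
    """Sample lighting, camera, and physics parameters for one episode."""
    return EpisodeParams(
        light_direction_deg=(_uniform(rng, "light_pitch_deg"),
                             _uniform(rng, "light_yaw_deg")),
        light_intensity=_uniform(rng, "light_intensity"),
        camera_position_offset_m=tuple(
            _uniform(rng, "camera_position_offset_m") for _ in range(3)),
        camera_rotation_offset_deg=tuple(
            _uniform(rng, "camera_rotation_offset_deg") for _ in range(3)),
        cube_mass_kg=_uniform(rng, "cube_mass_kg"),
        cube_scale=_uniform(rng, "cube_scale"),
        cube_friction=_uniform(rng, "cube_friction"),
    )
```

Passing a seeded `random.Random` instance keeps the sampled configurations reproducible, which helps when comparing models trained with different subsets of the randomized parameters.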

After Domain Randomization

With sufficient domain randomization during simulated training, the sim2real gap can be overcome robustly.

Standard

Extreme Lighting Scenario

Extreme Camera Perspective

da Vinci Endoscope

Bridging the sim2real gap robustly allows us to execute with the da Vinci Endoscope, which is often ignored in autonomous surgical robotics in favour of commercial cameras not meant for surgical applications. The da Vinci Endoscope has its own light source, and its narrow, focused light and noisy image create a large gap for traditionally trained agents.

BibTeX Citation

@INPROCEEDINGS{haiderbhai2022dvrksim2real,
  author={Haiderbhai, Mustafa and Gondokaryono, Radian and Looi, Thomas and Drake, James M. and Kahrs, Lueder A.},
  booktitle={2022 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS)},
  title={Robust Sim2Real Transfer with the da Vinci Research Kit: A Study On Camera, Lighting, and Physics Domain Randomization},
  year={2022},
  pages={3429-3435},
  doi={10.1109/IROS47612.2022.9981573}
}